At GTC, Nvidia announced the Groq 3 LPU chip, which uses tech licensed from the AI company Groq. The LPU is one of seven upcoming data center chips intended to supercharge AI.

To improve chatbot performance, Nvidia plans to sell a new kind of processor, an LPU, optimized to run large language models (LLMs).

The “Nvidia Groq 3 LPU” chip was among seven upcoming chips Nvidia touted at the company’s annual GTC event, where it pitched the AI industry on why Nvidia’s chips continue to lead.

The LPU, or Language Processing Unit, comes from Nvidia’s deal this past December to license technology from a California AI company called Groq (not to be confused with the AI chatbot Grok from xAI). Founded in 2016, Groq released earlier LPU chips designed specifically for LLMs, offering faster speeds and better energy efficiency. The aim: to create an alternative to Nvidia’s enterprise GPUs, which can be used for a wider range of AI workloads.

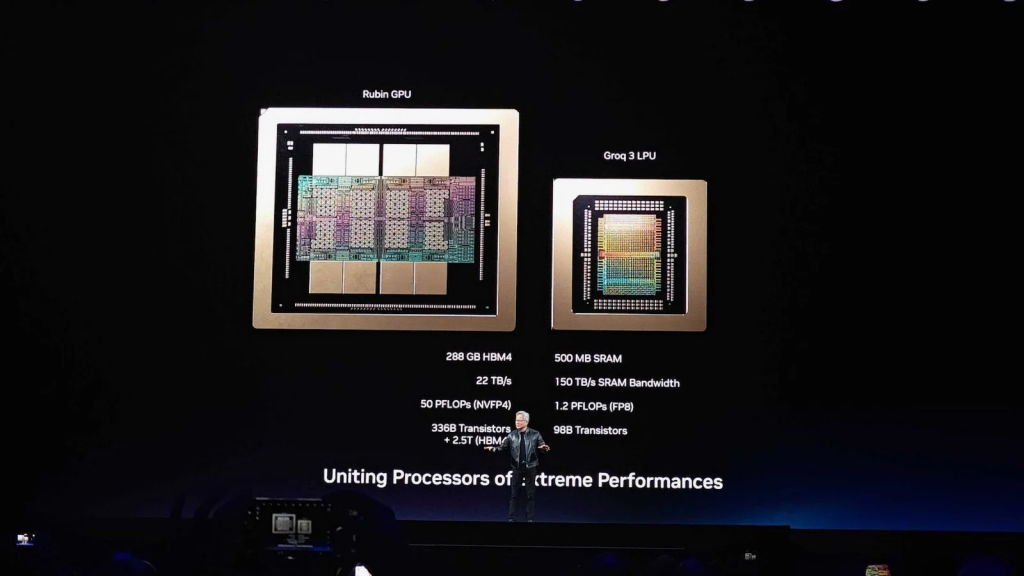

Groq’s LPU chips use SRAM (static RAM), which is faster than the HBM (high-bandwidth memory) typically found on Nvidia’s GPUs. On the downside, Groq’s LPUs can only offer “hundreds of megabytes” of SRAM, whereas HBM can exceed a hundred gigabytes per chip.

That’s why a single Groq 3 LPU only contains 500MB of SRAM, while Nvidia’s upcoming Rubin GPU will feature 288GB of HBM4 memory. To compensate for the lower memory capacity, Nvidia is preparing to sell large batches of LPUs to work alongside the rest of its data center chips, giving AI companies a way to squeeze out even more performance.
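To put "large batches" in perspective, here is a rough back-of-the-envelope sketch using only the two capacity figures quoted above. It assumes decimal units (1GB = 1,000MB) and that SRAM capacity simply adds up across chips, which ignores any real-world overhead. The function name `lpus_to_match` is made up for illustration and is not part of any Nvidia or Groq tooling.

```python
import math

# Capacity figures quoted in the article.
LPU_SRAM_MB = 500    # SRAM in a single Groq 3 LPU
RUBIN_HBM_GB = 288   # HBM4 in a single Rubin GPU

# Assumption: decimal units, 1 GB = 1000 MB.
MB_PER_GB = 1000


def lpus_to_match(capacity_gb: float, sram_mb: float = LPU_SRAM_MB) -> int:
    """Smallest number of LPUs whose combined SRAM is at least capacity_gb.

    Assumes capacity adds linearly across chips (a simplification).
    """
    return math.ceil(capacity_gb * MB_PER_GB / sram_mb)


if __name__ == "__main__":
    # LPUs needed to equal the memory capacity of one Rubin GPU.
    print(lpus_to_match(RUBIN_HBM_GB))  # 576
```

By that simple measure, it would take 576 LPUs to equal the memory capacity of a single Rubin GPU.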
