Also at GTC, the company unveils NemoClaw, its toolkit for running the fast-rising, open-source OpenClaw AI agent locally.

Following a delay, Nvidia is ready to release the DGX Station, a desktop computer that can run AI models locally, bringing data center-class AI performance to your home or office.

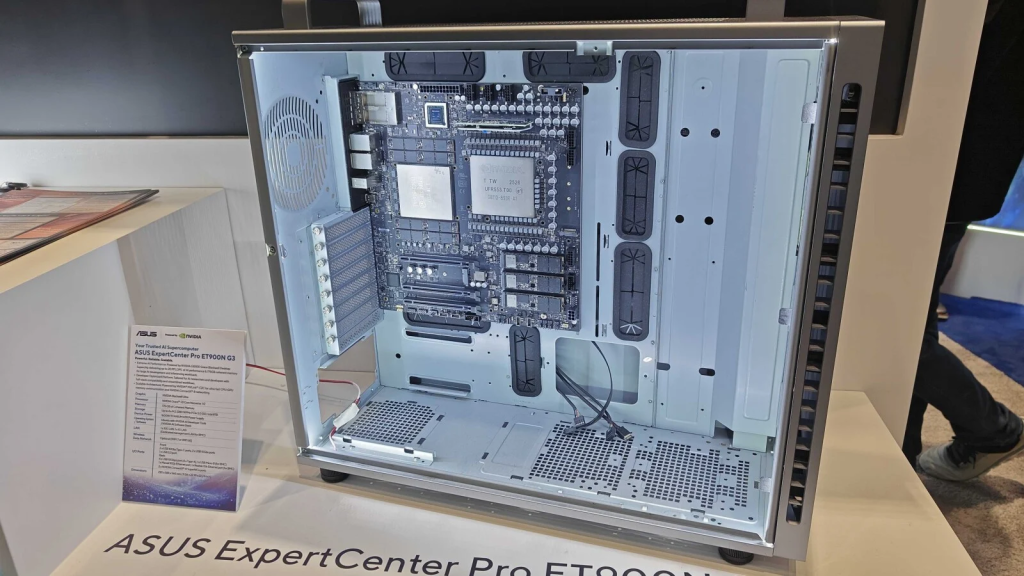

At the company’s annual GTC event, Nvidia revealed that PC manufacturers are starting to accept orders for their DGX Station units. Nvidia’s website lists the six upcoming models, one each from Asus, Dell, HP, Gigabyte, MSI, and server maker Supermicro.

Each unit carries its own name and comes in a desktop tower case. Pricing, however, is unclear: the vendors are asking interested customers to fill out a form with their contact information and will follow up with more details. Nvidia also declined to reveal pricing, but told journalists in a briefing that DGX Station units should begin shipping within weeks.

DGX Station: A Pumped-Up Spark

Introduced a year ago, the DGX Station is essentially the more powerful big brother of the DGX Spark, a $4,000 mini PC that can also run AI models locally. The portable DGX Spark uses a smaller GB10 Grace Blackwell chip and 128GB of unified memory, enough to run AI models with up to 200 billion parameters.

In contrast, the DGX Station uses a larger Nvidia GB300 chip and a staggering 784GB of “coherent memory” shared between the CPU and GPU. Nvidia says that is enough to run even larger, more advanced AI models of up to 1 trillion parameters.
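Those parameter ceilings follow from memory capacity. The back-of-the-envelope sketch below shows the arithmetic, assuming Nvidia's figures refer to 4-bit (FP4) weights and counting only the weights themselves, not the KV cache, activations, or operating system overhead. The function name `weight_memory_gb` is hypothetical and used only for illustration.

```python
# Rough estimate of the memory needed just to hold a model's weights.
# Assumption: Nvidia's parameter figures imply 4-bit (FP4) weights,
# i.e. 0.5 bytes per parameter. Real deployments need extra headroom
# for the KV cache, activations, and the operating system.


def weight_memory_gb(num_params: float, bits_per_param: int = 4) -> float:
    """Return the weight footprint in gigabytes (1 GB = 10**9 bytes)."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


if __name__ == "__main__":
    models = [
        ("200B-parameter model (DGX Spark class)", 200e9),
        ("1T-parameter model (DGX Station class)", 1e12),
    ]
    for name, params in models:
        for bits in (4, 8, 16):
            print(f"{name}, {bits}-bit weights: "
                  f"{weight_memory_gb(params, bits):,.0f} GB")
```

At 4 bits, a 200-billion-parameter model needs about 100GB, which fits in the Spark's 128GB, while a 1-trillion-parameter model needs about 500GB, which fits within the Station's much larger coherent memory pool. At 16-bit precision the same models would need roughly 400GB and 2,000GB, which is why low-precision formats matter for these local-AI claims.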

But like the DGX Spark, the DGX Station will almost certainly be expensive, and it is being marketed toward enterprises. The DGX Station was supposed to arrive last year but was pushed back to this spring. In a press briefing, Nvidia indicated that fitting the GB300 chip and its motherboard into a desktop case was a challenge that required more time.
